It seems every day there’s a new concern or warning about artificial intelligence (“AI”)...

Just this week news broke that “the Godfather of AI,” as AI pioneer Geoffrey Hinton is often called, had left his job at Google, where he designed machine learning algorithms. The reason, as he explained on Twitter, was “so that I could talk about the dangers of AI without considering how this impacts Google.”

“Google,” he added, “has acted very responsibly.”

Google hasn’t just been a leader in AI development, but perhaps its biggest booster... writing in its annual report that AI will “assist people and benefit society everywhere...”

But when it comes to society, there’s a dark side... and it’s happening fast.

And it’s not just Hinton who is concerned...

OpenAI co-founder Elon Musk has raised alarms, and just today the online education company Chegg watched its stock plummet after its CEO said...

In the first part of the year, we saw no noticeable impact from ChatGPT on our new account growth and we were meeting expectations on new sign-ups. However, since March we saw a significant spike in student interest in ChatGPT. We now believe it’s having an impact on our new customer growth rate.

But the only AI developer that appears to have sounded a siren of sorts about AI’s impact on society is Microsoft. It’s a warning none of its competitors has included even in their most recent quarterly filings... not Alphabet, not Amazon, not Meta.

I first pointed this out two months ago, when ChatGPT and generative AI were still emerging.

But it’s worth repeating given everything that has happened in the supersonic span between then and now...

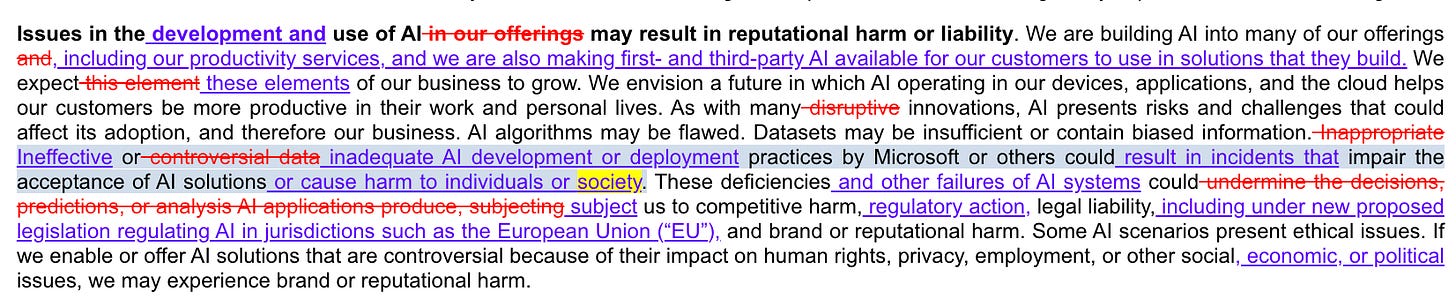

Starting with its July 10-K, months before OpenAI launched ChatGPT and before it integrated ChatGPT into its revived Bing search engine, Microsoft tweaked the “risk factor” about how AI might “result in reputational harm or liability.”

Specifically, as you can see below, it added the line that AI could “result in incidents” that “cause harm to individuals or society.”

The disclosure was unchanged in the subsequent first quarter 10-Q in October, but was altered again in January’s second quarter 10-Q – after the ChatGPT hoopla hit. As the snippet below shows, “society” was mentioned a second time, almost in case you missed it the first time... that it “may have broad impacts on society.”

Finally, in its most recent 10-Q, filed last month, while there were no new mentions of “society,” this time the company noted that the AI in question includes AI from “our strategic partner, OpenAI.”

I realize all of this is boilerplate, but I like to remind investors that boilerplate is there for a reason... and sometimes those risks do become real.

Among them, as it relates to society, that AI could be coming after your job...

Look no further than IBM, whose CEO Arvind Krishna told Bloomberg yesterday that roughly 7,800 jobs, or 3% of its workforce, could be replaced by AI.

The news service quoted Krishna as saying those jobs would include “more mundane tasks such as providing employment verification letters or moving employees between departments.” But he also said that HR functions “such as evaluating workforce composition and productivity” will likely be phased out over the next decade.

The real surprise, of course, will be if the very engineers who design AI wind up disrupting themselves out of work... and the software industry itself. I wrote as much in March, quoting my friend Paul Kedrosky and his partner Eric Norlin of SK Ventures as saying...

We have nothing against software engineers, and have invested in many brilliant ones.

We do think, however, that software cannot reach its fullest potential without escaping the shackles of the software industry, with its high costs, and, yes, relatively low productivity.

A software industry where anyone can write software, can do it for pennies, and can do it as easily as speaking or writing text, is a transformative moment.

It is an exaggeration, but only a modest one, to say that it is a kind of Gutenberg moment, one where previous barriers to creation – scholarly, creative, economic, etc. – are going to fall away, as people are freed to do things only limited by their imagination, or, more practically, by the old costs of producing software.

This will come with disruption, of course. Looking back at prior waves of change shows us it is not a smooth process, and can take years and even decades to sort through.

If we're right, then a dramatic reshaping of the employment landscape for software developers would be followed by a "productivity spike" that comes as the falling cost of software production meets the society-wide technical debt from underproducing software for decades.

The obvious question is why all of this is happening now, and not before the rollout of ChatGPT... and the surge of interest in generative AI.

AI, after all, has been around for years.

As Paul puts it...

Before November people hadn't thought seriously about what current AI could do. Now every board is demanding companies rethink all staff counts on a zero-based level.

He adds...

Too many people think AI is coming for someone else. That is a narcissistic error.

Lots of that going around, these days.

Ideas? Tips? Thoughts? I can be reached at herbgreenberg@substack.com.

(I also write two investment newsletters for Empire Financial Research, Empire Real Wealth and Herb Greenberg’s Quant-X System. For more information, click here and here.)